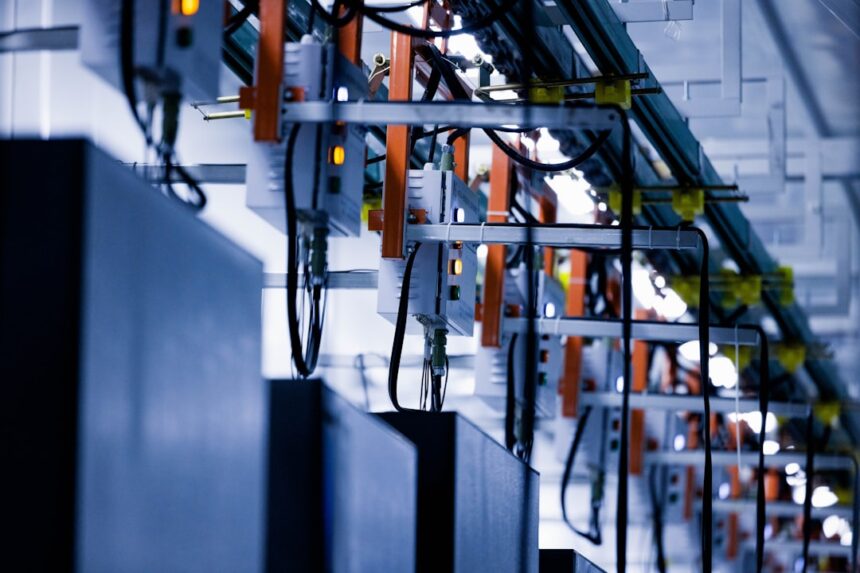

Power constraints have become one of the defining bottlenecks in AI infrastructure buildout, with data centers routinely unable to match their GPU capacity against what the electrical grid can reliably supply. Into that gap steps a new entrant with a specific technical fix.

Niv-AI, a Tel Aviv-based startup founded last year, has emerged from stealth with $12 million in seed funding. The round was backed by Glilot Capital, Grove Ventures, Arc VC, Encoded VC, Leap Forward, and Aurora Capital Partners. The company declined to share its valuation.

The problem the firm is targeting is well-documented at the highest levels of the industry. During his keynote at Nvidia’s annual GTC conference, CEO Jensen Huang stated plainly: “There is so much power squandered in these AI factories.” The company followed that with a direct financial framing: “Every unused watt is revenue lost.”

The underlying mechanics explain why. As frontier labs run thousands of GPUs simultaneously to train and operate advanced models, the processors generate frequent, millisecond-scale power demand surges when switching between computation and inter-GPU communication. Those surges make it extremely difficult for data centers to manage their grid draw. According to the announcement, data centers are currently forced to either pay for temporary energy storage to absorb the spikes or throttle GPU usage — in some cases by as much as 30%. Either path reduces the return on capital already spent on expensive chips.
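To make those mechanics concrete, here is a minimal toy model in Python. Every number in it (per-GPU wattage, phase lengths, GPU count) is an illustrative assumption rather than a figure from Niv-AI or Nvidia, and the function names are hypothetical. It sums the draw of GPUs that all switch between a compute phase and a communication phase in lockstep, then reports the peak, the average, the largest millisecond-to-millisecond jump, and the share of peak-provisioned power that goes unused on average.

```python
# Toy model only: all constants below are assumed for illustration,
# not measurements from any real GPU or data center.
COMPUTE_W = 700   # assumed per-GPU draw during computation (watts)
COMM_W = 300      # assumed per-GPU draw during inter-GPU communication (watts)
COMPUTE_MS = 6    # assumed length of each compute phase (milliseconds)
COMM_MS = 4       # assumed length of each communication phase (milliseconds)


def gpu_power(t_ms: int) -> int:
    """Per-GPU draw at millisecond t_ms for a repeating compute/comm cycle."""
    phase = t_ms % (COMPUTE_MS + COMM_MS)
    return COMPUTE_W if phase < COMPUTE_MS else COMM_W


def aggregate_trace(num_gpus: int, duration_ms: int) -> list[int]:
    """Total draw per millisecond when every GPU switches phase in lockstep."""
    return [num_gpus * gpu_power(t) for t in range(duration_ms)]


def summarize(trace: list[int]) -> dict[str, float]:
    """Peak, mean, largest one-millisecond swing, and average unused headroom."""
    peak = max(trace)
    mean = sum(trace) / len(trace)
    largest_step = max(abs(b - a) for a, b in zip(trace, trace[1:]))
    return {
        "peak_w": peak,
        "mean_w": mean,
        "largest_step_w": largest_step,
        # Share of peak-sized supply that sits idle on average.
        "unused_fraction": 1 - mean / peak,
    }


if __name__ == "__main__":
    stats = summarize(aggregate_trace(num_gpus=1000, duration_ms=100))
    for name, value in stats.items():
        print(f"{name}: {value:,.3f}")
```

Under these assumed numbers, a supply sized for the peak sits partly idle on average while the total still jumps by hundreds of kilowatts within a single millisecond, which is the tension the paragraph above describes.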

“We just can’t continue building data centers the way we build them now,” said Lior Handelsman, a partner at Grove Ventures who sits on Niv-AI’s board.

Sensors First, Then a Prediction Model

The company’s approach begins with measurement. Niv-AI is currently deploying rack-level sensors that detect GPU power usage at millisecond resolution, both on hardware the startup owns and with design partners. The goal at this stage is to map the specific power profiles of different deep learning tasks and identify where capacity is being left on the table.
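As a hedged sketch of what working with such millisecond-level readings could look like, the Python snippet below scans a list of power samples (one per millisecond) and returns the intervals in which draw exceeds a chosen threshold. The function name `find_surges`, the threshold, and the sample values are all hypothetical. Nothing here reflects Niv-AI's actual sensors or software, which the source does not describe in detail.

```python
def find_surges(samples_w: list[float], threshold_w: float) -> list[tuple[int, int]]:
    """Return (start_ms, end_ms) index pairs, inclusive, where draw exceeds threshold_w.

    samples_w is assumed to hold one reading per millisecond.
    """
    surges = []
    start = None
    for i, watts in enumerate(samples_w):
        if watts > threshold_w and start is None:
            start = i                      # a surge begins at this sample
        elif watts <= threshold_w and start is not None:
            surges.append((start, i - 1))  # the surge ended on the previous sample
            start = None
    if start is not None:                  # the trace ended while still in a surge
        surges.append((start, len(samples_w) - 1))
    return surges


if __name__ == "__main__":
    # Made-up rack readings in watts, one per millisecond.
    readings = [300, 300, 700, 700, 300, 700]
    print(find_surges(readings, threshold_w=500))
```

Grouping samples into intervals rather than flagging individual readings makes it easier to compare how long and how often surges occur across different workloads.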

From that dataset, the engineering team plans to train an AI model designed to predict and synchronize power loads across a data center — described internally as a “copilot” for data center engineers. The founders, CEO Tomer Timor and CTO Edward Kizis, frame the end product as an intelligence layer sitting between the data center and the electrical grid itself.

“The grid is actually afraid of the data center consuming too much power at a specific time,” Timor said. “The problem we’re looking at is a problem with two sides of the rope. One is to try to help the data centers utilize more GPUs, and hopefully make more of the power that they’re already paying for. On the other hand, you can also create much more responsible power profiles in between the data centers and the grid.”

Why the Timing Works in Their Favor

The pitch lands against a backdrop of hyperscalers facing land-use restrictions and supply chain constraints as they attempt to build new data center capacity. Extracting more performance from existing infrastructure, without adding physical footprint, carries obvious appeal in that environment.

Niv-AI expects to have an operational system running inside a handful of U.S. data centers within the next six to eight months.

Photo by Homa Appliances on Unsplash

This article is a curated summary based on third-party sources.