California’s AB 316, which took effect January 1, 2026, removed legal cover for enterprises claiming an AI system acted without human approval — arriving just as agentic AI crossed a threshold that made that defense more tempting than ever.

Between December 2025 and January 2026, no-code agent tools from multiple vendors entered the market alongside OpenClaw, an open-source personal agent published on GitHub. According to the report, generative AI effectively moved from a slow, human-prompted chatbot model to autonomous operation across complex enterprise workflows. The report’s analogy is direct: the technology stopped crawling and broke into a sprint, while governance frameworks were still designed for a child that could not yet walk.

The Accountability Gap

Previous AI governance focused on model output — drift, alignment, data exfiltration, poisoning — with humans reviewing decisions before they became consequential. Loan approvals, job applications, and similar high-stakes outputs had people in the loop by design. Autonomous agents remove much of that buffer.

The stated goal is to run business operations at machine pace. But the liability standard, the report argues, has not changed: the risk profile of a machine-operated workflow should be no worse than a human-operated one. As one industry summary cited in the piece puts it, “AI does the work, humans own the risk.”

The problem is structural. Governance built for chatbot interaction is static. Autonomous agents, by design, make decisions continuously and at volume — often chaining actions across multiple corporate systems in ways that can exceed the access privileges any single human user would be granted. Without operational code enforcing governance at each step of a workflow, the efficiency gains collapse under the weight of unmanaged risk.
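What "operational code enforcing governance at each step" might look like can be sketched in a few lines. The example below is a minimal illustration, not a method described in the report: the `Action`, `Policy`, `check`, and `run_workflow` names, the system labels, and the three-way allow/escalate/deny outcome are all assumptions made for the sketch.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Action:
    """One step an agent wants to take (hypothetical shape)."""
    agent_id: str
    system: str      # e.g. "crm" or "payroll" (illustrative labels)
    operation: str   # e.g. "read" or "write"


@dataclass
class Policy:
    """Per-agent allowlist plus operations that need human sign-off."""
    allowed: dict[str, set[tuple[str, str]]] = field(default_factory=dict)
    needs_approval: set[tuple[str, str]] = field(default_factory=set)


def check(action: Action, policy: Policy) -> str:
    """Return 'allow', 'escalate', or 'deny' for a single step."""
    pair = (action.system, action.operation)
    # Anything not explicitly granted to this agent is denied by default.
    if pair not in policy.allowed.get(action.agent_id, set()):
        return "deny"
    # Granted but high-stakes: hand the step to a human reviewer.
    if pair in policy.needs_approval:
        return "escalate"
    return "allow"


def run_workflow(actions: list[Action], policy: Policy) -> list[tuple[Action, str]]:
    """Evaluate every step in order; stop at the first one not allowed."""
    log = []
    for action in actions:
        decision = check(action, policy)
        log.append((action, decision))
        if decision != "allow":
            break
    return log
```

The point of the design is that the check runs per step rather than once per session, so a chain of individually plausible actions cannot drift past what the agent was granted, and the returned log gives a human owner something to review.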

Permissions, Zombie Agents, and Budget

The report names concrete risks. Agents can accumulate persistent service account credentials, long-lived API tokens, and write access to core file systems. OpenClaw illustrated the tension clearly: it offered a user experience comparable to working with a human assistant, but security experts quickly identified that inexperienced users could be compromised through it. Enterprise IT teams, the report notes, have long absorbed the cost of shadow IT, inheriting systems they didn’t build and cleaning up after them. With autonomous agents, that inherited mess carries materially higher stakes.
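The credential risks listed above lend themselves to a simple automated audit. The following is a rough sketch under stated assumptions: the `Credential` record, the `fs:write` scope label, and the 90-day age limit are hypothetical choices for illustration and do not come from the report or from any particular identity system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Credential:
    """A credential held by an agent (hypothetical inventory record)."""
    agent_id: str
    kind: str                        # "service_account" or "api_token"
    issued_at: datetime
    expires_at: Optional[datetime]   # None means it never expires
    scopes: frozenset


def audit(creds, now, max_age=timedelta(days=90)):
    """Return (credential, reasons) pairs for credentials worth reviewing.

    The 90-day default is an assumed threshold, not a recommendation
    taken from the source.
    """
    findings = []
    for cred in creds:
        reasons = []
        if cred.expires_at is None:
            reasons.append("no expiry")
        if now - cred.issued_at > max_age:
            reasons.append("older than max_age")
        if "fs:write" in cred.scopes:
            reasons.append("file-system write access")
        if reasons:
            findings.append((cred, reasons))
    return findings
```

In practice the list of credentials would come from whatever identity provider or secrets manager the organization already runs; the sketch only shows the review logic.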

The fix proposed is not a policy committee. It is upfront IT budget and labor allocated to central discovery, oversight, and remediation across the full population of employee- and department-created agents — potentially numbering in the thousands within a single organization.

There is also the question of what happens when agents are simply forgotten. The report recounts an example of a consultant who saved a client hundreds of thousands of dollars by identifying and shutting down a “zombie project” — an abandoned AI pilot still running on a GPU cloud instance. That risk, the report warns, could multiply into a zombie fleet across an enterprise.
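Finding forgotten deployments is largely an inventory problem. As a minimal sketch, assuming an organization can already list its agent deployments with an owner, a last-activity timestamp, and an hourly cost (the `Deployment` fields, the 30-day idle threshold, and the 30-day month used for the cost estimate are all assumptions for illustration):

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Deployment:
    """A running agent or pilot (hypothetical inventory record)."""
    name: str
    owner: Optional[str]        # None if nobody claims it
    last_activity: datetime
    hourly_cost_usd: float


def find_zombies(deployments, now, idle_after=timedelta(days=30)):
    """Flag deployments that are ownerless or idle past the threshold."""
    return [
        d for d in deployments
        if d.owner is None or now - d.last_activity > idle_after
    ]


def monthly_waste_usd(zombies):
    """Rough monthly cost of the flagged deployments (30-day month)."""
    return sum(d.hourly_cost_usd * 24 * 30 for d in zombies)
```

A flagged deployment is a candidate for review, not automatic shutdown; some low-activity agents are idle by design.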

The report’s conclusion follows directly: organizations that do not fund that discovery, oversight, and remediation work upfront will absorb the compounding costs of unmanaged agent deployments later.

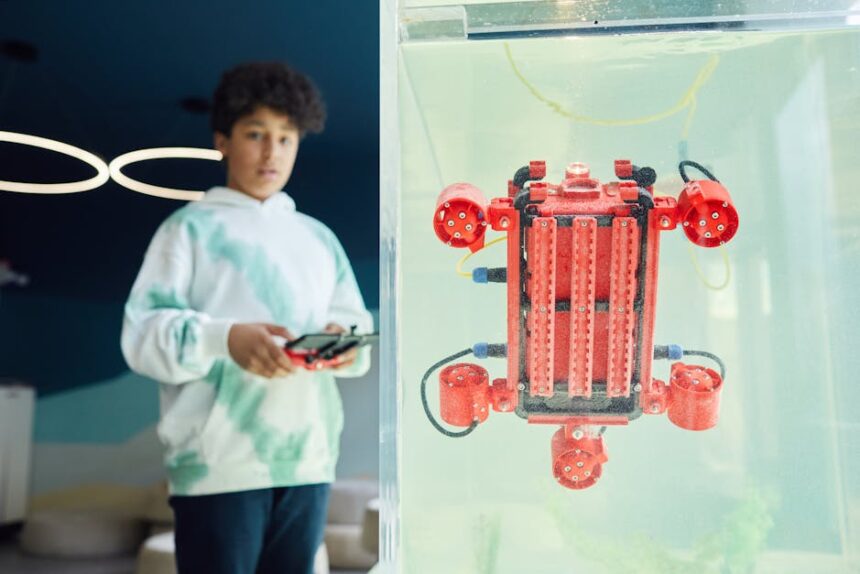

Photo by Vanessa Loring on Pexels

This article is a curated summary based on third-party sources.